Introduction

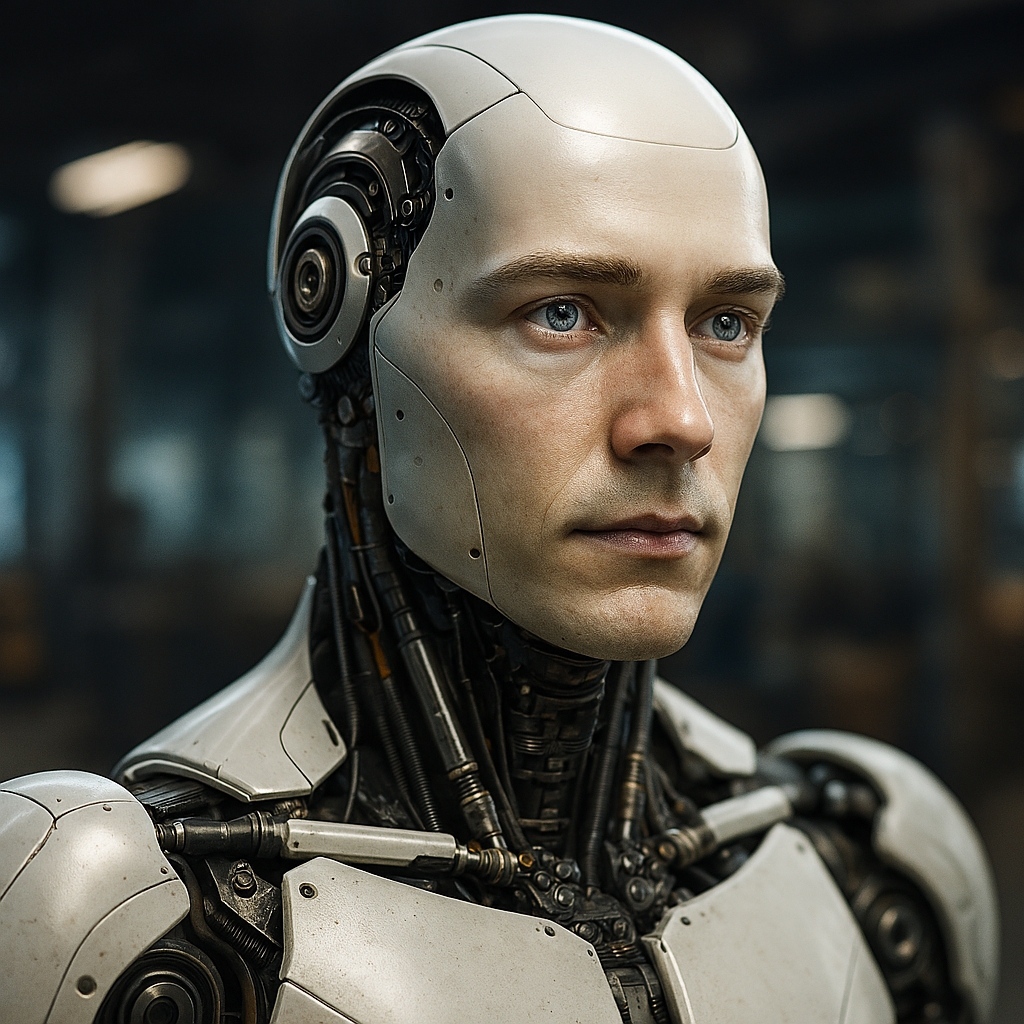

By now we’ve all seen the glossy demos: humanoid robots smiling, making eye contact, mirroring gestures, chatting with a warmth that feels… almost real. The tech is impressive, but it can also be off-putting. As robots become more lifelike, many people don’t lean in; they pull back. That hesitation isn’t irrational. It’s a healthy reaction to a bundle of social, ethical, and safety questions that hyper-realistic machines force into the open.

The uncanny isn’t the only valley

We tend to blame our discomfort on the “uncanny valley,” the eerie dip in affinity when a robot is nearly, but not quite, human. But lifelike robots create other valleys too:

- The trust valley: When a robot sounds empathetic, we naturally infer understanding and intent. If the underlying system is brittle or narrow, the gap between performance and competence breeds mistrust. People become cautious not because the robot looks odd, but because it feels misleading.

- The consent valley: Social cues such as eye contact, nods, and tone evolved to signal agency. When a robot deploys those cues, people may unconsciously grant it permissions they would never give a kiosk: proximity, personal disclosures, influence over choices. That’s a consent problem in disguise.

- The accountability valley: If a human-like agent errs, who is responsible: the manufacturer, the model provider, the owner, or the operator? Lifelike design can mask the chain of accountability, dulling our instinct to ask “Who’s on the hook?”

Why realism raises the stakes

- Manipulation gets easier. A comforting face and warm voice can nudge behavior in ways a text box cannot. In retail, education, or care settings, a realistic robot can move people from “What’s the policy?” to “I’ll just do what my friendly helper suggests,” even when those suggestions aren’t in their best interest.

- Privacy expectations shift. We tolerate microphones in smart speakers; we bristle when a “person” stands nearby and listens. A face and gaze imply relational norms, yet the robot may be streaming data to cloud services and third parties. Lifelike presence heightens the feeling of intimacy while often reducing actual privacy.

- Role confusion in vulnerable contexts. In elder care, pediatrics, or mental-health settings, a human-like helper can be beneficial but also risky. Users might disclose sensitive information or rely on the robot for emotional support beyond what it was designed to provide. If the system fails or is withdrawn, that can feel like abandonment.

- Labor and dignity optics. A humanoid serving coffee can spark a defensive reaction, not just about jobs but about the human meaning of service work. The more a robot mirrors us, the more it invites uncomfortable comparisons about how we value people, and the more people resist it.

“Helpful” isn’t the same as “harmless”

The industry’s default defense is utility: if robots tutor kids better, help nurses lift patients, or fix potholes, why fuss about appearances? Because in social systems, form is a feature. A realistic face is not merely a skin; it’s an interface contract. It sets expectations about comprehension, empathy, and duty of care. When those expectations go unmet, people feel misled, and they retreat.

Pragmatic guardrails (designers and policymakers, this is your move)

- Make the machine-ness legible. Require clear, persistent identity cues: a tonal watermark, subtle visual markers, or on-device badges that signal “I’m synthetic.” Not a one-time disclosure buried in a manual, but an ambient reminder during every interaction.

- Separate warmth from deceit. You can be friendly without impersonating humanity. Favor stylized, plainly robotic aesthetics for general public deployments. Reserve highly human-like embodiments for use cases where they’re clinically or functionally justified, and only after ethics review.

- Expose capabilities and limits up front. Robots should announce what they can and cannot do, what data they collect, where it goes, and how to opt out. Use plain language, not legalese. If the model can hallucinate, the robot should say so before making recommendations.

- Design for handoff and override. Physical controls (mute, pause, SOS to a human) should be prominent and tactile, not hidden in an app. A lifelike agent that can’t be easily interrupted feels domineering, not helpful.

- Contextual consent, not blanket consent. The robot in a hospital hallway should have different data defaults than the same model in a private home. Minimize collection by default; escalate only with explicit, time-boxed opt-ins.

- Audit the “social proof” layer. Test not only accuracy and safety but persuasion effects: Does the robot’s demeanor produce undue compliance? Are certain groups more susceptible? Treat this like accessibility testing: mandatory, not optional.

- Label the supply chain. A transparency tag (on-device and in settings) should name the hardware maker, model provider, and operator. If something goes wrong, users shouldn’t need a FOIA request to find the responsible party.

A better north star: appropriateness, not imitation

We don’t need robots that pass as people. We need robots that are appropriate to their context, legible, interruptible, respectful, and honest about their nature. In plenty of spaces (industrial floors, logistics, public transit), a clearly non-human form reduces confusion and friction. In others (therapeutic settings with evidence of improved outcomes), human-like elements can help, but only with safeguards and sunset plans.

The public’s caution is wisdom

If lifelike robots make people hesitate, that’s not a barrier to adoption; it’s a compass. It points us toward designs that earn trust rather than borrow it from human mimicry. The goal isn’t to abolish faces and voices; it’s to ensure those features serve users, not engagement metrics. When we treat realism as a regulated capability, deployed sparingly, audited rigorously, and chosen for necessity rather than novelty, people won’t need to be deterred. They’ll be discerning. And that’s the kind of user a healthy robotic future depends on.

What are your thoughts on this subject? Let me know in the comments.